Generative AI

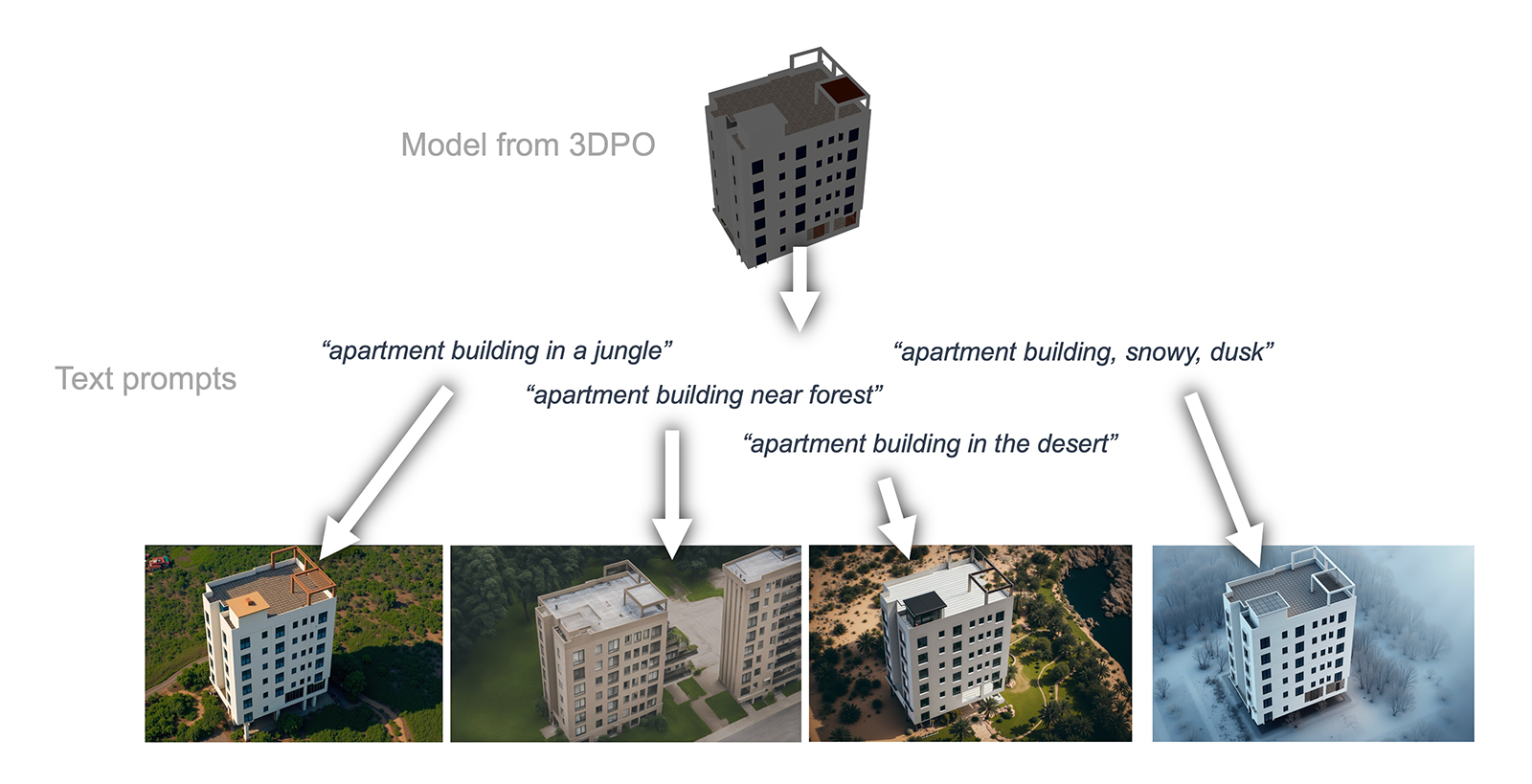

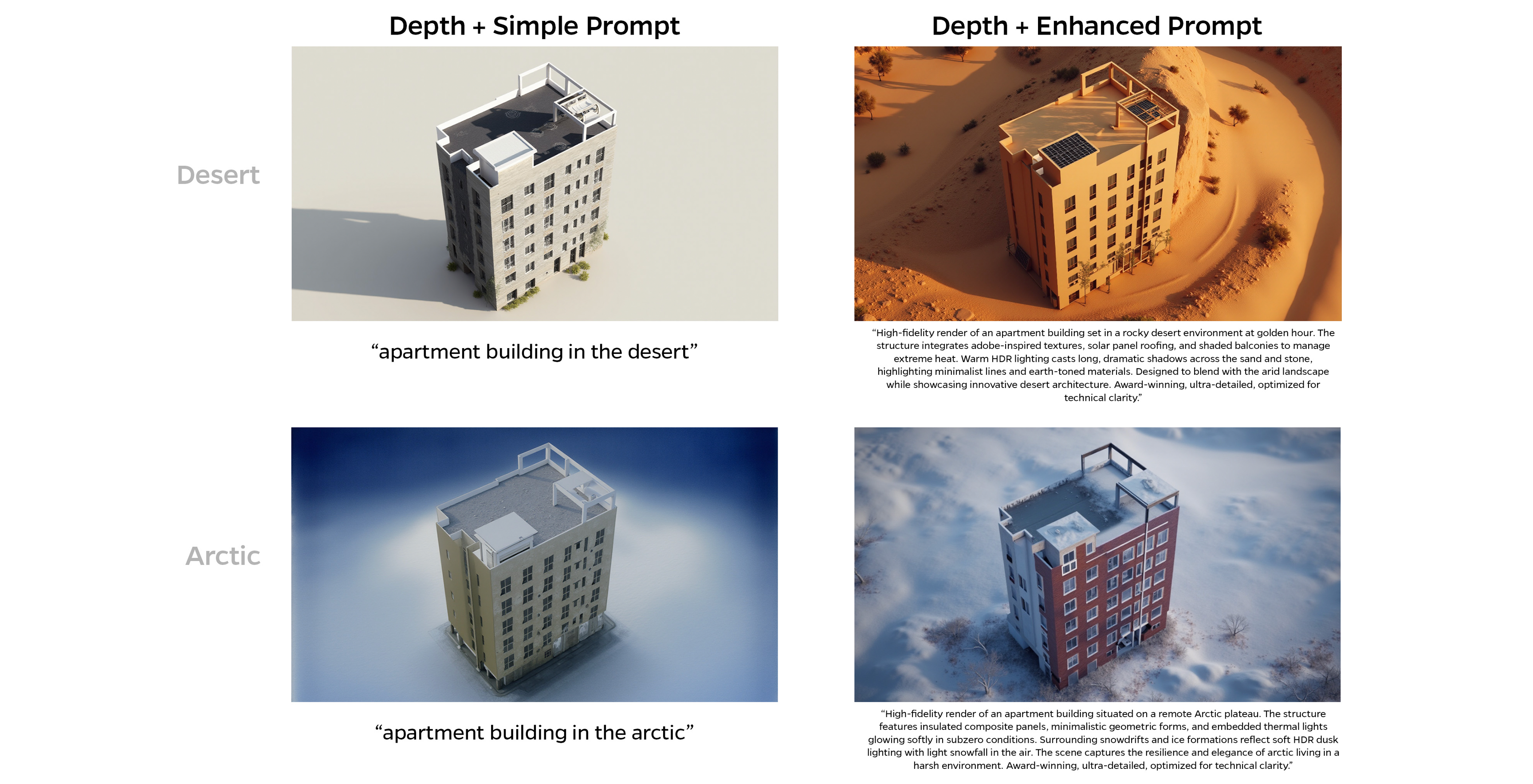

Since 2022, I've managed generative AI infrastructure for the Communications department at the Johns Hopkins Applied Physics Laboratory, maintaining GPU-accelerated installations of tools like Invoke AI and Kokoro for our group of 50+ designers and 100+ communication specialists. As a result, projects that previously took weeks are now completed in days, with hundreds of images produced in a fraction of the usual time.

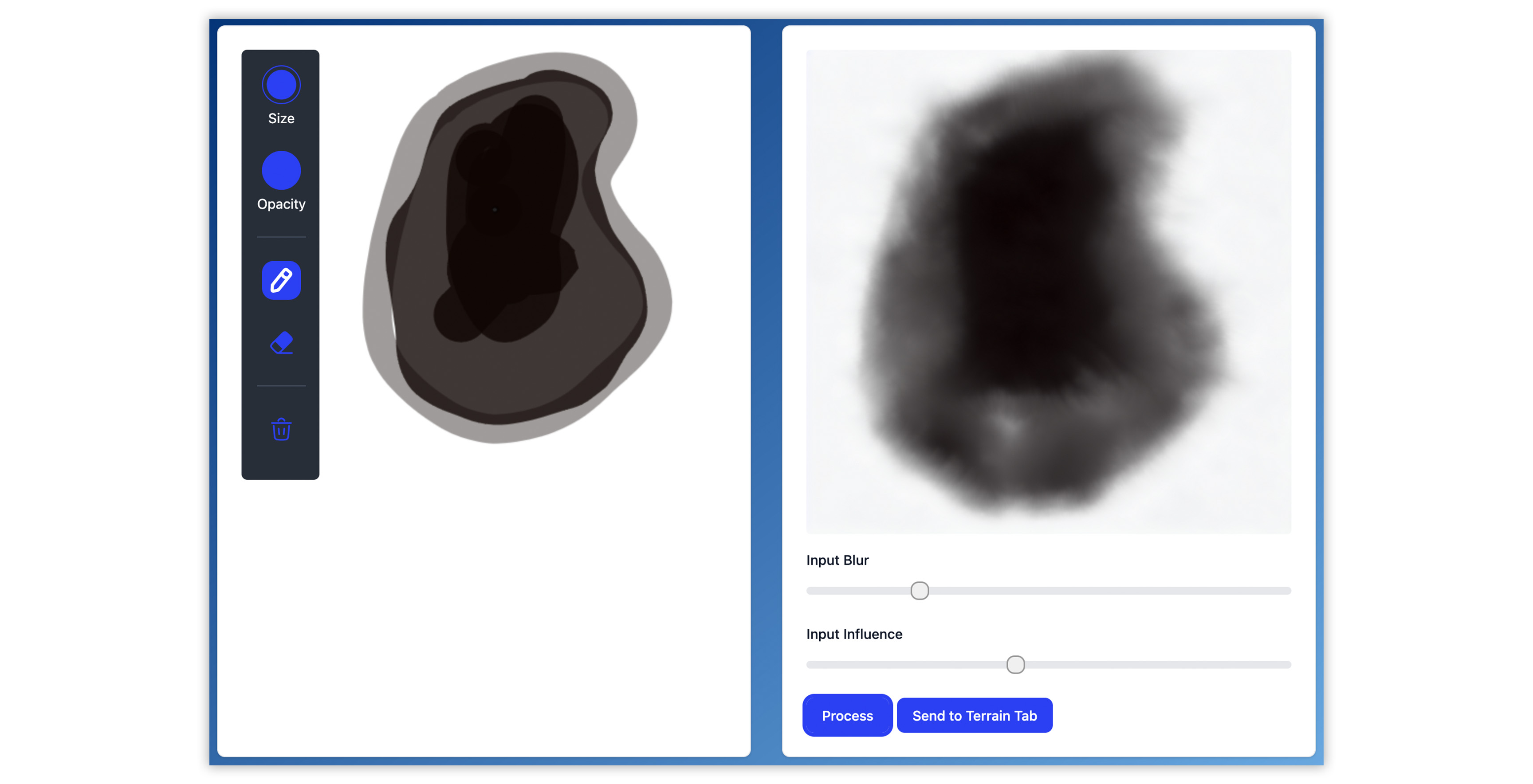

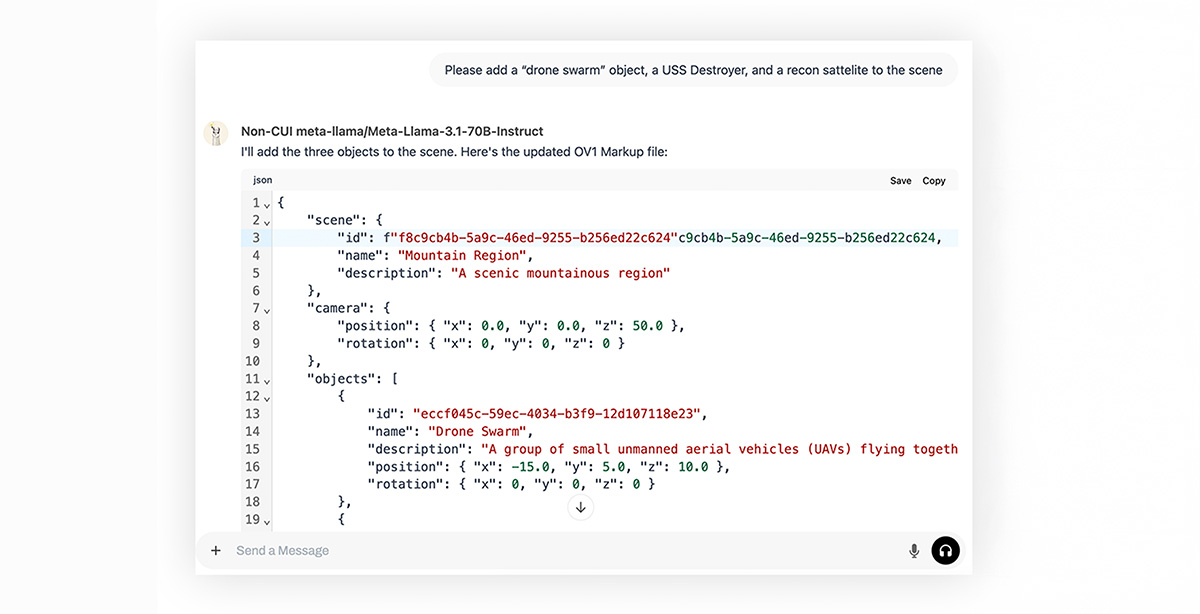

I work with these tools daily while developing comprehensive training programs, creating hours of instructional materials, and speaking at panels and training events across the laboratory. My work spans both technical implementation (GPU management, ControlNet-based workflows, API integration) and practical application on on-prem hardware. I also currently serve as the lead frontend engineer on an LLM-powered war gaming simulation system. The projects below showcase the publicly releasable portion of these efforts.